OpenAI IPO Dreams Mask Structural Dependency Risk

Platform dependence on one partner compresses margins, caps pricing power, and turns a hyped IPO into a valuation trap.

Global Risk & Market Intelligence

Dependence on one partner exposes the illusion of pricing power and embeds structural margin pressure ahead of public market scrutiny.

Pulling back from Nvidia exposes unsustainable compute costs and signals margin compression just as IPO optics start to matter.

The Groq licensing deal signals defensive positioning, not dominance, and invites regulatory pressure that threatens margins and valuation multiples.

New AI models threaten entrenched chip economics, risking margin compression and a valuation trap for semiconductor leaders.

Projected upside of 75 and 280 percent reads like momentum chasing, not the durable cash flow expansion that would survive scrutiny.

Soft skills get rebranded as strategy while boards ignore quantifiable returns and invite valuation trap dynamics.

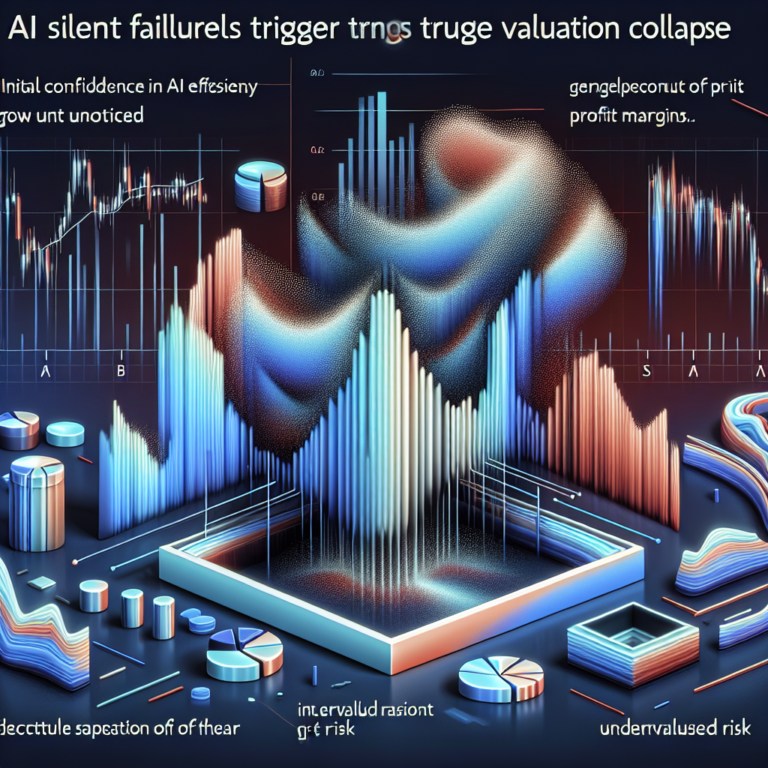

Productivity gains hide longer hours, signaling margin pressure and an impending valuation trap across software firms.

Undetected AI errors compound into EBITDA erosion, exposing fragile governance and inflating downside risk across enterprise valuations.

State-controlled AI regimes threaten valuation floors, undermine governance, and accelerate capital flight from exposed tech ecosystems.